The Median Tragedy

Bye-bye Average

We like to tell ourselves that artificial intelligence is a great equalizer and that it will lift everyone. That is, at least, what I believed as I started building my own company around AI.

What I am increasingly seeing instead, across leadership roles, university classrooms, and conversations with people who are growing up in the AI age, is a different pattern: a slow erosion of the middle. Especially in cognitive and white-collar work.

This is not a sudden collapse. It is a gradual shift, a quiet median tragedy, where average skills, average judgment, and average output are being eroded and a new shape of skill distribution is emerging.

And if you care about the future of society, this matters far more than it appears at first glance.

The Stable Average

For a long time, skill distribution in cognitive work was imperfect, but survivable.

Most people clustered around the middle. Some struggled and changed paths. Some were exceptional and stood out. But crucially, being average was enough to build a stable career and a decent life.

The same pattern existed in academia. A small group didn’t fit and dropped out. A large middle built solid careers. A few outliers pushed the boundaries of their fields.

As in any blog post, I’m oversimplifying, of course. Real distributions are messy, sometimes right-skewed, with a handful of truly extraordinary people, and life isn’t as predictable as a bell curve.

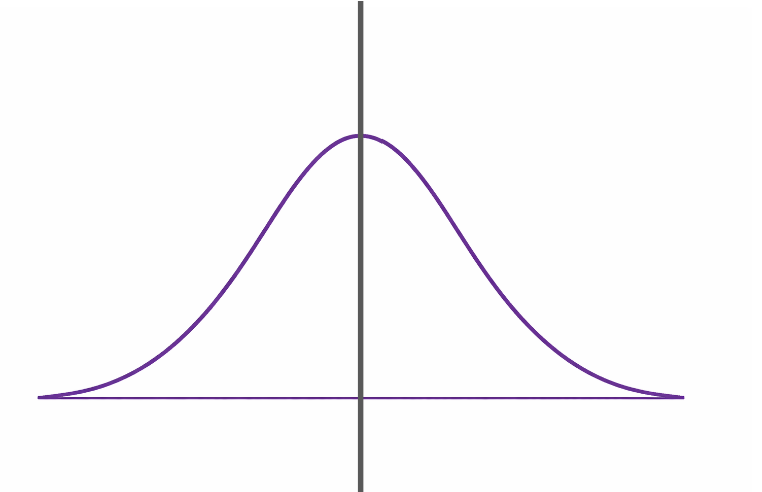

Still, for decades, most of us could look at our classmates or colleagues and recognize a familiar shape: something close to a normal distribution:

There was a wide middle: people who weren’t outstanding, but were competent enough to be useful, learn on the job, and improve gradually. You could think, deliver reasonably well, and earn your place by staying relevant in an organization. Your work created economic value, which translated into a stable and viable life.

The middle acted as a buffer. It absorbed change.

AI is changing this massively.

The Distribution Split

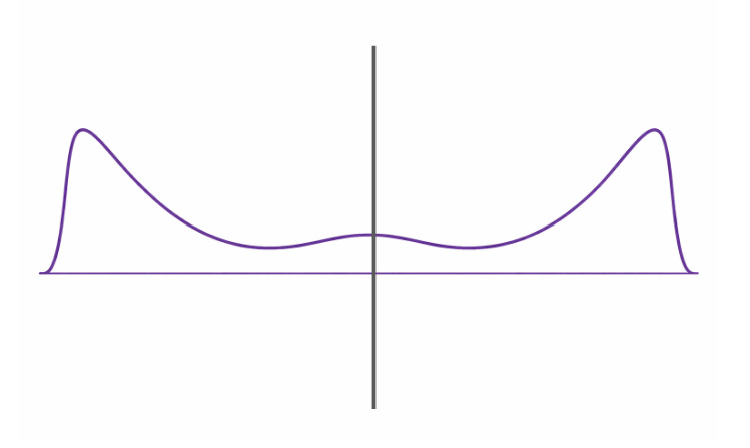

With AI, at first glance, it looks like everyone moves up. Tools get better, outputs improve, productivity rises. The curve seems to shift right.

But if you look closer (especially at universities, among students who use AI freely), the curve is splitting.

Those who know how to think deeply, contextualize problems, and use AI as a counterweight to their own reasoning start compounding quickly, moving toward the right of the distribution.

At the same time, another group starts drifting in the opposite direction. They still produce good output, but the thinking behind it thins out. The work looks fine. The understanding isn’t. They cognitively offload their thinking to AI tools, as this paper argues.

The middle is doomed.

How People Quietly Slide to the Left

What’s really worrying me is how subtle this change is. Nobody wakes up and decides to stop thinking.

It begins with the convenience these AI tools bring us. We’ve all been in most of these scenarios:

• AI drafts the emails entirely.

• AI summarizes the report and lets us read in 5 minutes something that used to take 20.

• AI writes all the code for us.

At first, it feels like leverage because we are more productive. Then, without our noticing, it becomes a replacement. Outputs are accepted because they sound right, not because they’ve been questioned.

And just like that, our attention to detail starts to erode. You see work that is structurally weak but stylistically polished. Decisions backed by fluent explanations that don’t survive a discussion.

How to Be on the Right Side of this Distribution

Is there something we can do to be on the right side of the distribution? Yes.

When built for augmentation, AI tools are useful in three main ways:

• Prototyping and rapid iteration

• Challenging your views and your work

• Handling low-cognition, high-friction tasks

What I see in students who use AI this way is that their work becomes more valuable. They do not trust outputs at face value. They treat them as drafts, hypotheses, and starting points.

They develop the ability to orchestrate tools and tasks, deciding what should be automated and what should not. Like factory leaders, they design systems rather than performing every task within them.

They are selective about productivity. They automate tasks that require little judgment (logging, formatting, organizing) while protecting the work that demands real thinking.

They also experiment deliberately. They do not fear AI tools, nor do they adopt them blindly. They test different tools and workflows, keeping what adds value and discarding what does not.

Large Scale Consequences

If this median tragedy continues unchecked, the consequences will not stay confined to individual careers or classrooms. They will affect, and already are affecting, all of us.

First, inequality widens sharply. Not just in income, but in agency. Those who know how to think, frame problems, and use AI as leverage will compound their advantage. Those who rely on AI as a substitute for thinking will slowly lose theirs. The gap between the “haves” and the “have-nots” will widen.

Second, we are engaging in massive cognitive offloading without understanding its long-term effects. Delegating memory, synthesis, judgment, and even curiosity to machines may feel efficient, but we do not yet know what it does to human capability at scale.

This is why working with AI cannot be treated as a convenience. It must be treated as a foundational skill, on the same level as mathematics, writing, and reasoning: something that is taught explicitly, practiced deliberately, and assessed seriously.

If we fail to do this, the median tragedy will amplify forces that are already reshaping the world: polarization, instability, and structural inequality.

The real question is whether we choose to prepare people to think with these tools, or quietly accept a future where the middle disappears.
